2016

Computer Graphics Forum, 2016

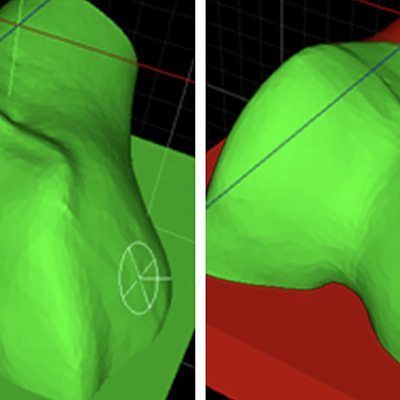

In Proceedings of Visual Computing in Biology and Medicine, 2016

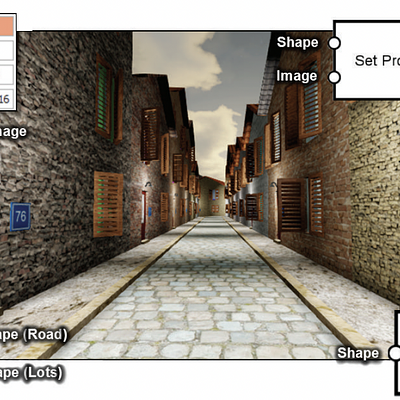

Computer Graphics Forum, 2016

Computer Graphics Forum, 2016

In Proceedings of Vision, Modeling, and Visualization, 2016

Computer Graphics Forum, 2016

2015

In Proceedings of High Performance Graphics, 2015

In Proceedings of ACM Multimedia, 2015

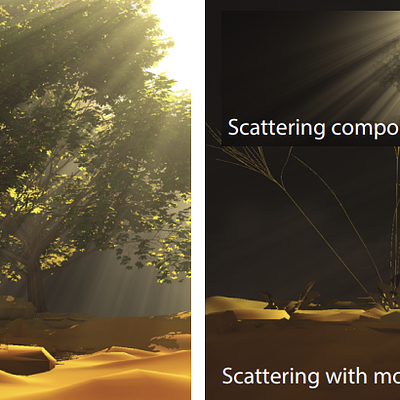

Computer Graphics Forum, 2015

International Journal of Computer Assisted Radiology and Surgery, 2015

In Proceedings of Graphics Interface, 2015

IEEE Transactions on Visualization and Computer Graphics, 2015

In Proceedings of Graphics Interface, 2015

In Proceedings of DATA ANALYTICS 2015, The Fourth International Conference on Data Analytics, 2015

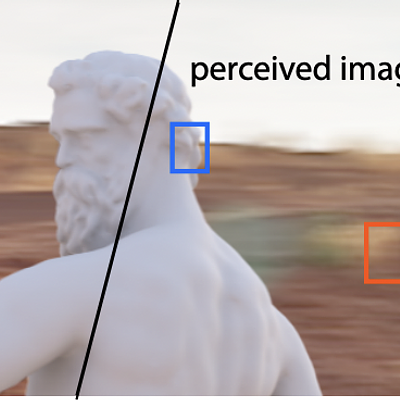

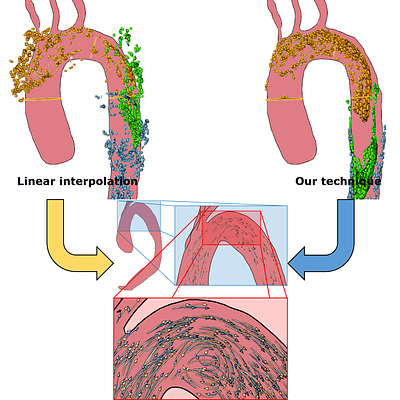

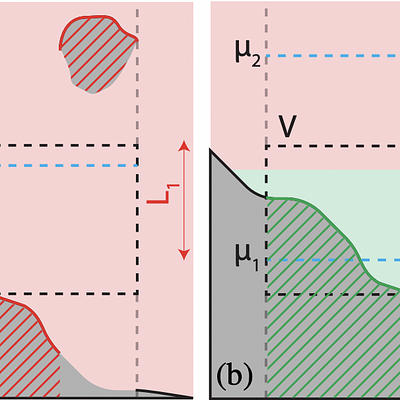

![A complex scene with fine details and global illumination. Left: Image rendered with PBRT [PH10] at 32 samples per pixel in 2.5 minutes. Middle: Image reconstructed by our algorithm in 2.6 minutes, including rendering and filtering. Right: Equal-error image rendered with 200 samples per pixel in 12.7 minutes.](https://publications.graphics.tudelft.nl/rails/active_storage/representations/redirect/eyJfcmFpbHMiOnsibWVzc2FnZSI6IkJBaHBBczBIIiwiZXhwIjpudWxsLCJwdXIiOiJibG9iX2lkIn19--f3a5f529ef45f02ae2011bf4414f7429114778cc/eyJfcmFpbHMiOnsibWVzc2FnZSI6IkJBaDdCem9MWm05eWJXRjBTU0lJY0c1bkJqb0dSVlE2RTNKbGMybDZaVjkwYjE5bWFXeHNXd2RwQXBBQmFRS1FBUT09IiwiZXhwIjpudWxsLCJwdXIiOiJ2YXJpYXRpb24ifX0=--8786188a9503cffd21c59bbcd5519930c73c621f/bee2015-teaser.png)